RAID, the final frontier. These are the voyages of…ah, who am I kidding? Choosing to implement RAID today isn’t embarking on a mission to boldly go where no one has gone before. This technology has been around for decades, and today RAID support can be found within most operating systems, integrated on motherboards, or easily added to any system as a third-party controller for almost nothing (a quick search on Amazon yields controllers for less than $30). With its wide availability, you may at some point find yourself contemplating taking the plunge and configuring a RAID setup on your own system. However, before doing so, there are some things you should consider.

1. Pre-Planning

Before getting started, it’s good to ask yourself some basic questions, such as:

- What type of data will be stored on the RAID volume?

- What applications will be accessing or running on the RAID volume?

- Is performance, redundancy, or a combination of both important to you?

Although these questions are basic in nature, having a general understanding of what you want to accomplish before you get started will help ensure the end result fits your needs and will minimize potential surprises.

2. Drives

- Drives should be the same formatted capacity.

- Depending on your RAID controller, if drives of different capacities are used, the final capacity of your RAID volume could be reduced.

- For example: A two-drive RAID 0 consisting of a 10GB and a 20GB drive would have a final capacity of 20GB (not 30GB), because every member of the RAID is limited to the capacity of the smallest member.

- Additionally, in cases where different drive capacities are used, the unused drive capacity may be permanently unusable.

- Using the same example, in a two drive RAID 0 consisting of a 10GB and a 20GB drive with a total capacity of 20GB, the remaining 10GB of space on the 20GB drive not used by the RAID may not be accessible on some RAID controllers.

- Drives should be the same speed (spindle speed and/or transfer rate).

- In a RAID setup, drives inherently work together as a single volume; faster drives will be artificially limited by slower drives in the same RAID volume.

- Drives should be supported for use with RAID controllers.

- To create differentiation in the drive market, drive vendors release a wide variety of drives tailored for use in particular configurations. Unfortunately, the by-product of this is that many desktop drives are intentionally de-featured, which can make them unsuitable for use with RAID controllers. One example of this is the lack of TLER (Time-Limited Error Recovery) support. Feel free to read the Wikipedia page on TLER, but essentially a drive without TLER can stay in an error recovery state long enough for a RAID controller to consider that drive offline. This causes the drive to drop, and the volume suffers a premature drive failure (in a non-redundant volume this can be catastrophic).
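To make the capacity point above concrete, here is a minimal sketch of the arithmetic for a stripe set built from mismatched drives. The function name `striped_capacity` is made up for illustration, and it assumes the controller limits every member to the size of the smallest drive, as described in the example above; some controllers may behave differently.

```python
def striped_capacity(drive_sizes_gb):
    """Return (usable_gb, unused_gb) for a stripe set in which every
    member contributes only as much space as the smallest drive.

    Assumption: the controller truncates each member to the smallest
    drive's capacity, as in the 10GB + 20GB example above.
    """
    if len(drive_sizes_gb) < 2:
        raise ValueError("a stripe set needs at least two drives")
    smallest = min(drive_sizes_gb)
    usable = smallest * len(drive_sizes_gb)
    unused = sum(drive_sizes_gb) - usable
    return usable, unused


usable, unused = striped_capacity([10, 20])
print(f"usable: {usable} GB, unused: {unused} GB")  # usable: 20 GB, unused: 10 GB
```

Running the same function over the drives you actually plan to buy is a quick way to see how much space a mismatched set would leave on the table.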

3. Power

There is no magical rule of thumb or complicated configuration requirement with this one; it’s a simple case of drives requiring power and RAID requiring multiple drives. It’s easy to get ahead of yourself here, purchasing multiple drives for a particular RAID setup while neglecting to consider the power those drives will consume. When drives don’t have sufficient power to operate properly, they can time out or disappear altogether. Sometimes the dropout isn’t long enough to create a problem for a particular application or OS function, but RAID controllers are less tolerant. Unfortunately, the symptoms can easily be misdiagnosed as a cable, controller, or software problem, and you can spend a lot of time (and money) tracking down an issue that ultimately turns out to be a power supply that is inadequate for the hardware installed.
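A rough way to sanity-check this before buying is to add up the numbers. The sketch below is only back-of-the-envelope arithmetic: the function name is made up, and every wattage in the example is a placeholder rather than a measurement, so substitute the figures from your own power supply and drive datasheets.

```python
def power_headroom_watts(psu_watts, other_load_watts, drive_peak_watts):
    """Return the watts left over once the rest of the system and the
    peak draw of every drive are subtracted from the PSU rating.

    A negative or very small result suggests the power supply deserves
    a closer look before the drives go in.
    """
    return psu_watts - other_load_watts - sum(drive_peak_watts)


# Placeholder values for illustration only (not taken from any datasheet).
drives = [25.0] * 6  # six drives, assumed 25 W peak each
headroom = power_headroom_watts(
    psu_watts=500, other_load_watts=300, drive_peak_watts=drives
)
print(f"headroom: {headroom} W")  # headroom: 50.0 W
```

Using peak rather than idle draw is a deliberate choice here, since drives tend to pull the most power while spinning up, and in a RAID they may all spin up together.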

4. Cooling

Cooling is another area where there isn’t a clear guideline for what is needed; it is more something to be mindful of when configuring a RAID setup. Traditional drives have spinning media that produces heat (not the case with Solid State Drives, or SSDs), and RAID requires multiple drives. These two factors can easily create a cooling problem in many low-end computer cases not designed with RAID in mind. Am I suggesting you rush out and purchase a “RAID certified” computer case? No (I am not even sure there is such a thing). I am merely pointing out that cooling can quickly become a factor if it isn’t considered. Drives subjected to high heat due to insufficient cooling can have much shorter life spans, leading to premature drive failures. No one implements RAID to increase their chances of a drive failure, but failing to accommodate cooling requirements can lead to exactly that.

5. UPS

The unfortunate reality is that many of us do not have a UPS (uninterruptible power supply) at home. Despite this truth, we all seem to survive (for the most part) just fine without one. However, when it comes to RAID setups, your chances of data corruption or loss are much higher with an unexpected power loss. Many RAID controllers use some form of cache to enhance write performance. Data that momentarily resides in cache (waiting to be written to the physical drives) is at risk, because cache is inherently volatile and will clear with loss of power. Unfortunately, disabling write cache to avoid this risk isn’t generally feasible, as write performance can be significantly impacted. So in cases where data protection is paramount, you should consider purchasing a UPS or looking at RAID controllers that offer integrated cache protection (e.g. battery backup).

6. RAID level

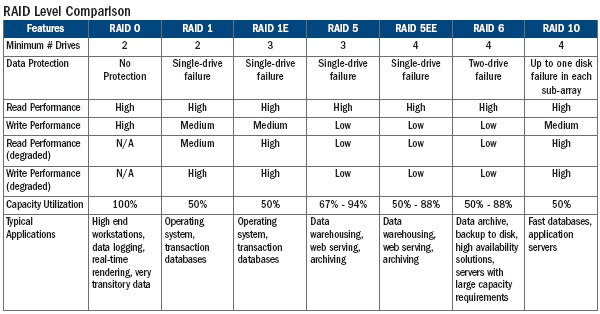

I won’t dive into all of the various RAID levels, as there are many, and availability can depend on the number of drives you have, the controller you use, and the intended use of the RAID volume. Suffice it to say, it is here where you will make one of your more pivotal configuration decisions. It is important to fall back on the answers you came up with during the pre-planning portion of this list. The overall driving force behind your RAID implementation should be why you want/need it. After that, you need to decide on a RAID level that best fits your needs.


One area many people forget to consider when choosing a RAID level is how things will run in the event of a drive failure. One of the many reasons to implement RAID is to add some level of redundancy that allows operation to continue (and, more importantly, data to survive) in the event of a drive failure. However, while the RAID volume is in this degraded state, you may continue to operate without data loss, but sometimes at the expense of performance (such is the case with parity-based RAID levels). Some applications (e.g. SQL Server) can be very intolerant of slow-performing volumes and may not run at all.
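When weighing levels against each other, it can help to put capacity and fault tolerance side by side. The sketch below covers only the common levels 0, 1, 5, 6, and 10 and assumes equal-size drives and the conventional definitions (for RAID 10, two-way mirrors). The function name and the minimum drive counts are my own simplification; your controller may support other levels or enforce different limits.

```python
# Conventional minimum drive counts; individual controllers may differ.
MIN_DRIVES = {0: 2, 1: 2, 5: 3, 6: 4, 10: 4}


def raid_summary(level, drive_count, drive_size_tb):
    """Return (usable_tb, failures_survived) for equal-size drives.

    failures_survived is the number of drive failures the volume is
    guaranteed to survive, not the best case.
    """
    if level not in MIN_DRIVES:
        raise ValueError(f"unsupported RAID level: {level}")
    if drive_count < MIN_DRIVES[level]:
        raise ValueError(f"RAID {level} needs at least {MIN_DRIVES[level]} drives")
    if level == 10 and drive_count % 2:
        raise ValueError("RAID 10 needs an even number of drives")

    if level == 0:   # pure striping: all capacity, no redundancy
        return drive_count * drive_size_tb, 0
    if level == 1:   # every drive holds a full copy
        return drive_size_tb, drive_count - 1
    if level == 5:   # one drive's worth of parity
        return (drive_count - 1) * drive_size_tb, 1
    if level == 6:   # two drives' worth of parity
        return (drive_count - 2) * drive_size_tb, 2
    # level 10: striped two-way mirrors; a second failure is survivable
    # only if it lands in a different mirror pair, so guarantee just one.
    return drive_count // 2 * drive_size_tb, 1


for level in (0, 5, 6, 10):
    print(level, raid_summary(level, 4, 2))
```

The numbers say nothing about how the volume performs while degraded, which is the other half of the decision described above.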

7. Performance

There aren’t a lot of performance tweaks that can be made to RAID volumes, but depending on which RAID level you choose, there may be additional configuration settings that play a role in overall performance. With stripe-based RAID levels, data is striped across multiple drives. When configuring a stripe-based RAID, you generally have some control over the size of the stripe/cluster/chunk that is written to each drive. Choosing the best size isn’t an exact science, but a common rule of thumb is 128 KB for general use and larger if you’re writing or working with large data files. It would be worth feeding the Google machine your stripe size and some specifics of your intended use to see what the general consensus is. This is yet another point at which a little pre-planning can help nail down a RAID configuration best suited for your specific needs.
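To see what the stripe size actually controls, here is a minimal sketch of how a simple RAID 0 layout could map a position in the volume to a drive. It assumes plain round-robin striping with no parity; the function name `locate` is hypothetical, and real controllers may lay data out differently.

```python
def locate(offset_bytes, chunk_bytes, drive_count):
    """Map a byte offset in the volume to (drive_index, offset_on_drive),
    assuming chunks are dealt out to the drives in round-robin order."""
    chunk_index, within_chunk = divmod(offset_bytes, chunk_bytes)
    row, drive_index = divmod(chunk_index, drive_count)
    return drive_index, row * chunk_bytes + within_chunk


CHUNK = 128 * 1024  # the 128 KB rule-of-thumb size mentioned above

print(locate(0, CHUNK, 2))              # (0, 0)
print(locate(CHUNK, CHUNK, 2))          # (1, 0)
print(locate(2 * CHUNK + 5, CHUNK, 2))  # (0, 131077)
```

With this layout, a request smaller than one chunk usually lands on a single drive, while a large sequential transfer is spread across all of them, which is one way to think about why larger files are often paired with larger stripe sizes.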

Certain RAID levels can offer performance gains over single drives, but sometimes at the expense of capacity or increased risk. The simple reality is that the more drives there are in a single RAID volume, the more moving parts there are (in the case of spinning drives), and the greater the risk. If during your pre-planning you identified performance as most important to you, then you may be willing to accept a bit of risk for increased performance. You just need to be aware of that going in, so there aren’t any unfortunate surprises.
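The way risk grows with drive count can be put into rough numbers for a non-redundant volume such as RAID 0, where losing any one drive loses everything. The sketch below assumes drive failures are independent and equally likely, which is a simplification, and the 5% figure is a placeholder chosen for illustration, not a real failure rate.

```python
def stripe_failure_probability(per_drive_probability, drive_count):
    """Probability that at least one drive in a non-redundant volume
    fails, assuming independent and identical drives."""
    all_survive = (1 - per_drive_probability) ** drive_count
    return 1 - all_survive


# Placeholder per-drive failure probability over some period.
p = 0.05
for n in (1, 2, 4):
    print(n, f"{stripe_failure_probability(p, n):.4f}")
```

Whatever per-drive figure you plug in, the result for the volume is always at least as high as for a single drive, and it climbs with every drive added.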

SSDs can be used in RAID volumes, but often at the loss of TRIM support. Although this is changing, many current RAID controllers do not support TRIM. Aside from longevity concerns, the performance degradation due to the lack of TRIM has been a real obstacle preventing SSDs from being used in RAID.

8. Back-ups

The importance of backing up your data is stressed so many times that it sadly begins to lose its impact. Take that, and combine it with the false sense of security that a redundant RAID level can give someone, and you can quickly find yourself researching data recovery services. It’s very common to hear someone say “I don’t need to back up, I have RAID”. Well, while RAID can add a layer of redundancy, it’s not a viable replacement for data back-ups…period. Redundancy doesn’t protect against viruses, malware, crashes, hackers, power outages, etc. There are many ways your data can be damaged or lost, and in many cases RAID offers nothing to combat them. Simply put, RAID should enhance your overall data protection efforts, not replace them.

9. Maintenance

Once a RAID volume has been properly configured, there isn’t much in the way of maintenance. However, if your RAID controller has any type of management software that offers email notification or event monitoring capabilities, you should take advantage of those. It’s not all that uncommon to experience a drive failure in a redundant RAID volume and not be aware of it. After all, staying up and running through a drive failure is likely one of the reasons you implemented RAID in the first place. If this occurs and you continue to run in a degraded state, then your RAID is no longer fault tolerant and any subsequent drive failure can mean permanent data loss. So, it’s in your best interest to leverage any and all capabilities your particular RAID controller may offer in monitoring the ongoing health of your RAID volumes.
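As one illustration of what such monitoring looks for, the sketch below scans status text in the style of Linux software RAID (the `/proc/mdstat` file) for arrays with a missing member. This is an assumption on my part: hardware controllers expose their status through their own tools, and the sample text and the function name `degraded_arrays` are made up for the example.

```python
import re

# md prints a member map such as "[2/2] [UU]"; an underscore marks a
# member that is missing or failed.
STATUS = re.compile(r"\[(\d+)/(\d+)\]\s+\[([U_]+)\]")
ARRAY_NAME = re.compile(r"^(md\w+)\s*:")


def degraded_arrays(mdstat_text):
    """Return the names of arrays whose member map shows a missing drive."""
    degraded = []
    current = None
    for line in mdstat_text.splitlines():
        name = ARRAY_NAME.match(line)
        if name:
            current = name.group(1)
            continue
        status = STATUS.search(line)
        if status and current and "_" in status.group(3):
            degraded.append(current)
    return degraded


# Made-up sample in the mdstat style: md0 is healthy, md1 has lost a member.
SAMPLE = """\
Personalities : [raid1]
md0 : active raid1 sdb1[1] sda1[0]
      976630336 blocks super 1.2 [2/2] [UU]

md1 : active raid1 sdd1[1]
      976630336 blocks super 1.2 [2/1] [_U]

unused devices: <none>
"""

print(degraded_arrays(SAMPLE))  # ['md1']
```

On a Linux machine using software RAID, the same function could be fed the contents of `/proc/mdstat` from a scheduled job that emails you when the list is not empty.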

10. Risk vs. Reward

If you’re still with me and have read through the items listed here, it’s completely acceptable to conclude that RAID may not be right for your particular setup. RAID can be a powerful technology to leverage in certain configurations, but in others it can add unnecessary risk and overhead with very little reward. For example, in a RAID 0 consisting of two 1TB drives, a single drive failure costs you all 2TB of data, not just the 1TB you would have lost had the drives not been in a RAID 0. The performance gain from a two-drive RAID 0 can be small in some cases, but the risk can be huge. For most home users, RAID falls under the classification of “just because you can do something, doesn’t mean you should”. Just because your particular OS or motherboard offers RAID capabilities doesn’t mean you’ll benefit from those capabilities. Consider the points listed here, and then decide if RAID is right for you.

In my self-built “high-end gaming/home workstation” (HD Video/Audio work), put together over the past few months and obviously necessitating a large amount of storage, both FAST storage and redundant storage (and thus the clear benefits offered by RAID), I have:

– 1x Crucial M4 128GB SATAIII SSD as a drive cache

– RAID0 array (480GB) of 2x 240GB Corsair ForceGT3 (SATAIII SSDs) for the main OS and most commonly used programs

– RAID0 array (1.2TB) of 2x 600GB WD Velociraptor 10,000rpm 64MB-Cache (SATAIII) Hard Drives acting as “intermediary” drives between SSD’s/storage and for programs that require huge space and fast speed

– RAID10 array (2TB) of 4x 1TB WD RE4 7.2krpm/64MB-Cache (SATAIII) Hard Drives for “secure” storage

– RAID1 array (3TB) of 2x 3TB Seagate F4 7.2krpm/64MB-Cache (SATAIII) Hard Drives for backups

– RAID0 array (1.5TB) of 3x 500GB WD Caviar Black 64MB-Cache (SATAIII) Hard Drives for miscellaneous stuff as the drives came from a previous build

– 5-Drive JBOD array (1.35TB) of one each: 320GB Samsung Spinpoint, 500GB WD Caviar Blue, 160GB WD Caviar SE, 120GB Hitachi Deskstar, 250GB WD Caviar Blue (all drives from past builds; all 100% healthy)

– RAID0 array (3TB) of 4x 750GB Seagate Momentus XT Hybrid Drives (work great for editing due to the total 32GB of NAND flash between the 4 drives allowing current projects to load instantly without taking space on the SSD arrays)

The drives are controlled by the following:

– Rampage IV Extreme On-Board 4x SATA6Gbps and 4x SATA3Gbps Ports

– Intel Dual-Processor 1GB DDR3 Cache PCI-E x8 Hardware RAID (SAS/SATA3) Controller x12 SATA6Gbps Ports

– Battery Backup for Intel RAID Card

– Promise Single-CPU 1GB DDR3 Cache PCI-E x4 Hardware RAID (SAS/SATA3) Controller x8 SATA6Gbps Ports

– Battery Backup for Promise RAID Card

I had to buy the extended bottom for my Case Labs TH10 case (which already has the 85mm extended top housing 3x 480mm radiators); now I have a total of 12x 3.5″ drive bays in the main chassis of which 3 hold SSD’s, and now a total of 24 more 3.5″ bays in the extended bottom with room for another 32… Plus, there are 2x “Slim” Blu-Ray Readers/Burners and 2x Slim Blu-Ray Readers/DVD-Burners taking up 2 total 5.25 bays.

AND IT’S NOT EVEN CRAMPED!

But, my point is… I have never had a drive fail and cripple me in 10 years (and lots of RAID arrays) because so long as you plan ahead, think smart, and don’t forget to add redundancy even for performance arrays… You won’t ever lose anything!

(Plus, I have 2x 8-Bay RAID NAS’s, one filled with 2TB Caviar Greens in RAID50 for 12TB of total storage, the other filled with WD RE4 2TB’s in RAID10 for 8TB of crazy-fast gigabit-ethernet storage)

nleksan,

First, I must commend you on your self-built workstation, as I have to believe that your particular setup exceeds what most end users would/could deploy on their own systems. Reading through your config, I think you’re illustrating a lot of the points I made in my original post, and I thank you for taking the time to share a good real-world example of how, when done right, RAID can be a valuable benefit.