The Past

The world of Central Processing Units (CPUs) is an interesting one, driven largely by the competition between AMD and Intel and, in some cases, VIA. It is strange to see how, over time, people’s expectations of CPUs have changed. In recent years it has become apparent that we will never be satisfied, that no processor is “fast enough”. As soon as a new processor is released, consumers find a way to push its limits, and this “need for speed” is what keeps companies like AMD and Intel going. The interesting thing here is how the quest for speed, and the means of attaining it, have changed over time.

Early in the game, manufacturing technology was not a major driver of change in processors; the focus was on the architecture. The leading architecture for PC applications was x86, and the competition between AMD and Intel was to make the best x86 processor possible. At the time, they didn’t have the luxury of hundreds of millions of transistors per chip. They had to pick and choose their battles, and attempt to predict what would be most important to the consumer.

At that time, clock speed was what everyone looked at to determine the top CPU. Intel’s first Pentium processor debuted with a clock speed of 66 MHz, which seems like nothing now, but was well above the speeds at which its predecessor, the 486, had launched. The emphasis on clock speed grew dramatically as Intel released its Pentium II, Pentium III, and Pentium 4 models at launch speeds of up to 300 MHz, 500 MHz, and 1.5 GHz respectively. Now we are all so used to clock speeds in the gigahertz range that we have forgotten how recently we broke that barrier.

The Present

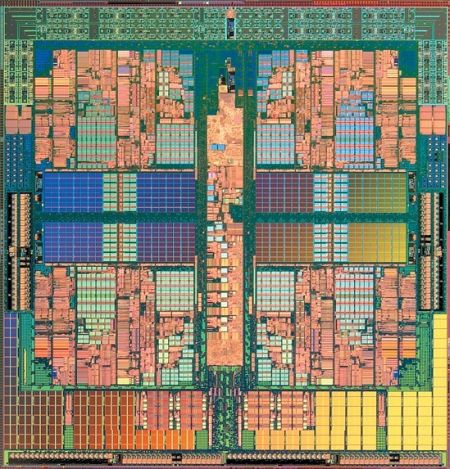

Now consumers not only want to do things fast, they want to do a lot of things fast, at once, and this has driven a change in the industry. The single-core processor had reached the point where its progress was beginning to slow, and the solution was dual-core, allowing users to multitask much more efficiently. Now we are seeing quad-core in desktop applications and significantly more cores in server applications. This only became possible through major advances in process technology: by shrinking the size of the transistor, manufacturers could fit far more of them on a chip. This allows not only for more powerful cores, but for multiple cores to be included in one package.
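To put a rough number on what a shrink buys, here is a minimal Python sketch. It assumes idealized scaling, where the area of a transistor shrinks with the square of the process node, and it uses the 65nm, 45nm, and 32nm nodes mentioned in this article. The function name `ideal_density_gain` is made up for illustration, and real chips do not scale this cleanly, so treat the results as ballpark figures only.

```python
def ideal_density_gain(old_nm: float, new_nm: float) -> float:
    """Idealized growth in transistors per unit area for a process shrink.

    Assumption: transistor area scales with the square of the node size.
    Real processes deviate from this, so this is only a rough estimate.
    """
    if old_nm <= 0 or new_nm <= 0:
        raise ValueError("node sizes must be positive")
    return (old_nm / new_nm) ** 2


if __name__ == "__main__":
    nodes = [65, 45, 32]  # process nodes mentioned in the article, in nm
    for old, new in zip(nodes, nodes[1:]):
        gain = ideal_density_gain(old, new)
        print(f"{old}nm -> {new}nm: about {gain:.2f}x transistors in the same area")
```

Under this assumption each step roughly doubles the number of transistors that fit in the same area, which is what leaves room for extra cores in one package.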

Intel has been a major driver of process technology, largely because of its leading-edge fabrication plants (fabs) and large R&D budget. It released the first 45nm CPU in 2007, and AMD followed roughly a year later. Now that AMD has spun off its fabs into a new, independent company, GlobalFoundries, expect more competition from them in driving process technology.

The Future

With CPUs trending towards more cores and less power, especially for laptops and netbooks, look for quick advances in manufacturing technology. It was only 2 years between 65nm and 45nm, and I would expect that 32nm processors are not far away. With a new process and smaller transistors, look for the next wave of processors to have faster clocks, more cores (on average), and longer battery life in mobile applications. Could the next wave of 8-core 32nm processors be right around the corner? Every time it appears that Intel is unbeatable, AMD has shown the thought behind its name (Advanced Micro Devices) and shocked Intel, time and time again. Look for AMD to quickly close the gap that Intel has created, and possibly beat them to the 32nm punch. And it could all go down sooner than we all think…

What Have You Heard?

The simple fact is that multi-core CPUs were already on the market, mostly used by the business community. The only reason Intel and AMD pushed them into the consumer PC is quite obvious: they couldn’t build a 4 GHz CPU. There was no trend. Furthermore, to this day Intel and AMD deny that they have hit the speed wall.

This is why we don’t see a 20 GHz multi-core CPU, say with each core at around 5 GHz… The idea that there is no legit application for such massive power is absurd. We didn’t need a 2 GHz computer to run XP, let alone Windows 2000, but they were there because companies could produce them.

5 GHz multi-core CPUs are not on the market because Moore’s law died 5 years ago. Even he admitted it…

Sometimes one just can’t believe what one sees in a piece. This one is somewhat exemplary. I have no intention of disagreeing with the perspective; I only tend to think its scope may be off.

Let me try to explain what I mean. Anybody who is familiar with Kai Hwang’s book would know there was a plethora of exotic CPU designs in the early days. Heck, wasn’t the dual-issue IBM 360 (is it the S series or something?) exactly an early incarnation of hyper-threading and multi-core? The other idea in heavy use today is pipelining. Other than these, aren’t there any other good ideas?

How about systolic arrays, or data-flow, or the general MIMD arena? Yeah, these are all dead ideas for the time being, for various reasons, but I would be the first to balk at the notion that our imagination is limited to Intel’s innovation plan, which this piece treats as cause for excitement and joy over our collective bright future!

There are very good reasons why we have arrived where we are today: this business requires very expensive investment at great risk, and it is a very difficult business to be in. Hence, I can understand Intel’s current direction and conservatism. However, as an uncommitted Intel user, I don’t have to agree with everything Intel has to sell. And I would be suspicious of anyone promoting an Intel-architecture-only fantasy of tomorrow, which, as far as I am concerned, is very dull and unspectacular.

OK, that being said, I want to make it clear that this is not an Intel-bashing podium. I say what I say mainly to point out that this limited imagination and narrow perception of human capability is not serving mankind, and I felt I should point that out.

I think both of you have valid points. I think the argument also depends heavily on who you consider to be the “user”. If you consider the actual consumer to be the user, then yes, the change was highly manufacturer-driven. If you consider the OEMs like HP and Dell to be the user, then you could argue that the “user” played a large role in the transition as well.

The interesting thing here is that the process has been a cyclic one. CPUs hit a wall in clock speed, so manufacturers turned to Hyper-Threading and multiple cores. This change has driven the need for change in manufacturing technology. That change has made everything much smaller, transistors especially. Now those smaller transistors are enabling high clock speeds again.

If you take a look at our review of the CyberPowerPC at http://www.techwarelabs.com/cyberpowerpc-gamer-xtreme-si/ you can see that it includes an Intel processor overclocked to about 3.7GHz with simple liquid cooling. With more extreme cooling kits this could most likely be pushed past 4GHz. I also expect the CPUs that will be released in the near future, especially those built on 32nm technology, to be sporting higher clocks than anything we have seen yet.

A lot of what both of you have said is true. I am very excited to see what the near future will hold for CPUs.

I agree with everything you have said, but I see it slightly differently. The need for multi-core CPUs was a natural evolution of hyperthreading and multiprocessing, which came about years before 4GHz was even a target or a reality. As a result of multiprocessing and hyperthreading, software writers created programs to take advantage of multiple CPUs, and thus consumers began to seek multiple CPUs in their regular machines at an affordable price. Thus were born the dual-core and quad-core CPUs and all that will follow.

Hi Tom,

Good article. Just wanted to clear some things up.

The 486 DX2 CPU, which came out a year before the first Pentium, actually sported clock speeds of 40MHz, 50MHz, 66MHz and 100MHz. Even the first 486, launched in 1989, managed 50MHz in its fastest form.

Also, the general consensus amongst those in the know is that if Intel and AMD had not run into serious power requirement and cooling issues when trying to get their CPUs to scale to 4GHz (which is incidentally why there are no 4GHz CPUs to this day), we would still be using single-core CPUs.

The multi-core CPU was a way to circumvent the issues that came with high clock speeds while adding computational power. At first, of course, there were very few applications that could even make use of the second core, and most home users still do not run two CPU-intensive applications at the same time.

So basically, the need for the multi-core CPU was not user-driven, but manufacturer-driven. What else could they have done to keep up with Moore’s law, if the CPUs could not be made to work at 4GHz speeds while maintaining power and thermal envelopes that were considered sensible?
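The point about few applications being able to use a second core can be made concrete with Amdahl's law, which the comment above does not name but which describes the same effect. The sketch below is only illustrative: the function name `amdahl_speedup` and the parallel fractions are assumptions, not measurements of any real application.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup from Amdahl's law.

    parallel_fraction: share of the work that can be spread across cores (0 to 1).
    cores: number of cores available.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workloads: mostly serial, half parallel, mostly parallel.
    for fraction in (0.1, 0.5, 0.9):
        for cores in (2, 4):
            speedup = amdahl_speedup(fraction, cores)
            print(f"{fraction:.0%} parallel on {cores} cores: {speedup:.2f}x")
```

A program that is mostly serial gains very little from a second core, which fits the observation that early dual-core chips did little for typical home use.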

Spectacular! I’m your #1 Fan, Tom!

Nice article, excellent view on the shape of CPU and processing. What do you guys think?

Yea I liked it, but I beg to differ on the 66MHz being the 1st Intel CPU, because I have an Intel 33MHz CPU on my desk (Using as a paperweight), but, still a good read, Thanks.

John, I probably could have made this more clear in the article, but the 66 MHz is in reference to the first processor in the Pentium family. You are correct, there were many more processors before that with lower clock speeds.